Photo by Luca Bravo on Unsplash

There is a setting on my laptop that used to be something you actually configured. I remember configuring it, years ago, with a drop-down and a checkbox and a text field, and I remember that the text field accepted values I had to understand in order to enter.

I don’t remember the values anymore. I don’t have to. The setting is now a toggle. On or off. The text field is gone, and the values it accepted are gone with it, along with whatever understanding I once had of what they meant.

Nothing was taken from me, exactly. The toggle does roughly what the old configuration did, most of the time, for most users, and it does so without requiring me to read a single line of documentation.

This is presented as an improvement. In most respects, it is.

But the knowledge is gone. Not so much because I forgot it as because the interface that asked for it is gone, and without the interface asking, I have no occasion to know. If something goes wrong with the setting now, I will not be able to diagnose it.

I will be able to toggle it off and on again. I will be able to reset it. If those two options fail, I will be able to call someone.

That last sentence is the one that matters.

Defensible in Isolation

The pattern has been named enough times that I will not spend long on it. Systems increasingly function by removing the demand for comprehension. The text field becomes the toggle. A common configuration becomes the default. The file system becomes the cloud. The troubleshooting becomes the reset. The command becomes the prompt.

Each substitution is presented as convenience, progress, or care, and each substitution is, in isolation, defensible.

The removal is not malicious; at this point it is rational. The engineer who replaces the text field with the toggle has reduced support tickets, onboarding friction, and the cognitive load on users who did not want the text field in the first place. The architect who abstracts the file system away from the user has solved a real problem that real users had. The platform that replaces troubleshooting with reset has measurably improved outcomes for the majority of cases.

The numbers are on their side.

I have worked in the industry long enough to know that these are not strawmen, but the actual arguments, made by actual people, most of whom are doing their jobs well. I have made these arguments myself. I make them in meetings. I make them in design reviews. My professional value is measured, in significant part, by how much understanding I can remove from the people downstream of the systems I build without removing utility.

This essay is not about whether those arguments are correct in their local frame. They often are.

It is about what those arguments do not address.

Every Trade Was Reasonable

I want to be generous to the exchanges before I am anything else, because the argument I am about to make does not depend on any individual trade having been a bad one.

The file system became the cloud because users were losing files, and the cloud was better at not losing files than users were. The command line became the GUI because the command line was genuinely hostile to everyone except the small number of people who had already learned it. The configuration became the default because most people did not want to configure. The subscription replaced the purchase because support, updates, and continuity genuinely cost something, and pretending they didn’t was a fiction maintained at users’ expense.

For each substitution, a reasonable person, looking at the specific trade on the specific day it was made, would have agreed. I would have agreed, and in many cases, I have. The aggregate of these agreements is the environment we now inhabit.

So I want to be clear: I am not going to argue that anyone should have refused these trades. I am not going to argue that the trades were a mistake.

However, I am going to argue something narrower and more uncomfortable, which is that the trades, taken together, have created a class of problem that the people who made them did not, and perhaps could not, address in the moment of making them.

The problem is not that we exchanged understanding for convenience. The problem is what the exchange did to responsibility.

Not Contingent on Comprehension

I am going to state my primary claim plainly, and then spend the rest of this piece testing whether I actually mean it.

Responsibility is incurred by participation, not by understanding. You do not escape the obligation to govern a system by becoming unable to govern it.

If I run a small business that depends on a piece of software, I am responsible for what that software does in my business. If the software makes an error that costs a customer money, the customer does not want to hear that I don’t understand how the software works; they simply want their money back. My inability to diagnose the error does not dissolve my obligation to make the customer whole.

It only makes me unable to meet that obligation without outside help.

This seems obvious when stated about a small business, likely because the frame is commercial. Of course the business owner is responsible. Of course ignorance is not a defense.

But the same logic holds in every system I participate in. If I vote in an election, I have accepted responsibility for the outcome I voted for, whether or not I understood the policies.

If I sign a contract, I have accepted responsibility for its terms, whether or not I read them.

If I deploy an automation in my home or my workplace, I have accepted responsibility for what that automation does, whether or not I can read its logic.

The responsibility is not contingent on comprehension. The responsibility is contingent on participation.

What comprehension does is make the responsibility something you can meet. Without comprehension, I still have the obligation. However, I can no longer fulfill it without delegating to someone who can.

This is the move I am asking you to take seriously. It is not that competence itself is a virtue, or that understanding is noble. It is that agency, in any meaningful sense, requires that the person who has taken on a responsibility be able, at least in principle, to discharge it.

When I surrender the capacity to understand a system I participate in, I do not thereby surrender the responsibility for what the system does. I only surrender the capacity to meet that responsibility on my own terms.

We have already incurred an obligation, and we are now making ourselves unable to meet it, and the obligation will not wait for us to become able again.

First Line of the Ledger

I said earlier that I make these arguments myself. I want to stay in that position for a while, because the essay does not work if I frame it as if I’m speaking of a choice made by someone else.

I am a senior solutions architect. My job, reduced to its essentials, is to take systems that require expertise to operate effectively and turn them into tools that largely do not.

I build dashboards that answer questions the user would otherwise have to write SQL to answer. I build automation that runs processes the user would otherwise have to execute manually. I build interfaces that stand between the user and the underlying data, logic, or infrastructure, and I justify my existence by how much of that underlying material the user no longer has to touch to achieve a desired outcome.

I am good at this. It is, in most respects, work I believe in. When I do it well, people who were previously dependent on specialists for basic answers become able to answer their own questions.

That is a real good, and I do not want to pretend it isn’t. But I know what I am also doing.

I am making the systems I build harder to reason about for the people who use them. I am replacing the requirement to understand how it works with the requirement to trust that it works. And, with each new tool, I am reducing the number of people who can diagnose a failure when one occurs.

I am, in a small and local way, removing the demand for comprehension from the environment I operate in, because that removal is what my employer pays me for.

The defense, when this is pointed out, is always the same: the user was going to use something. I made it better than the alternative.

This is almost always true, and it is almost always insufficient. The question is not whether my abstraction is better than the other abstractions. The question is whether, after my abstraction is in place, the user is capable of governing what they deploy. Most of the time, the honest answer is: less so than before.

I do not think this makes me a bad person, just as I do not think it makes the users bad. I think it means I am participating, professionally and daily, in the precise pattern I am writing this essay about. The obligation I just described – the obligation that does not dissolve when comprehension is surrendered – applies to the people I build for, and I am one of the people helping them surrender the comprehension.

This is less a confession than an accounting, and an honest accounting puts it on the first line of the ledger, not the last.

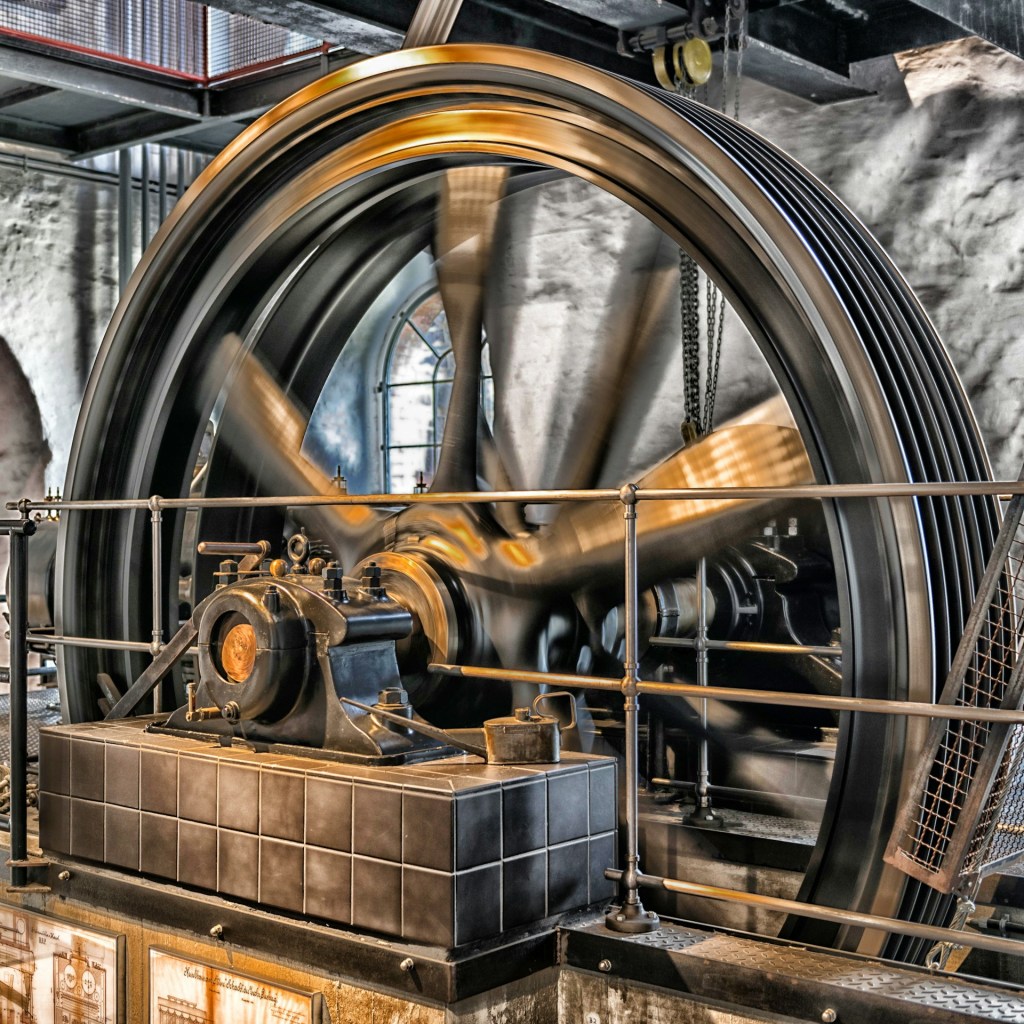

Deferred Obligations Accrue Interest

The failures that follow from this are not emergent. They are chosen, and in most cases, chosen repeatedly.

An organization adopts a platform. The platform works. The people who configured it leave. The platform continues to work, for a time, because the configuration is stable. Then the environment shifts: a source system upgrades, a field is renamed, a regulation changes… and the platform begins to produce subtly wrong outputs. No one notices immediately, because no one remaining can read the configuration.

The outputs look plausible, and important decisions are made on them. Months later, someone traces a problem back to the platform and discovers that it has been wrong for longer than anyone can reconstruct.

Every step in that sequence was a choice. The choice to adopt the platform came first, followed by the choice not to document the configuration in a form that would survive turnover. The choice to keep running the platform after the configurers left was another, and the choice to trust its outputs without any way to verify them was another still.

Each choice, on the day it was made, was defensible, but the cumulative failure was not an accident of the system. It was the system working exactly as its incentives said it should.

The failures in these systems are not tragic surprises.

They are predictable outcomes of predictable incentives, and the moral weight of them does not disappear because the incentives worked as designed. A person who takes out a loan they cannot repay has still taken out the loan, just as an organization that deploys a system it cannot govern has still deployed the system. The language of pressure, market forces, and operational realities may be accurate, but the conditions it describes do not amount to absolution.

Conditions explain why the failures happen; they do not turn chosen failures into accidents.

What I want to resist, however, is the slide from these outcomes are structurally likely to these outcomes are not anyone’s fault. The first is accurate; the second is an evasion. The structure is made of choices, and those choices were made by people. The people were often doing their jobs well, by the measures their jobs used, and those measures did not capture the cost of what was being lost.

The cost was always the ability to meet the obligation. Every time a system was adopted that the organization could not govern, an obligation was incurred that the organization could not discharge. The obligation did not evaporate in the presence of the new tool; it was deferred… and deferred obligations accrue interest.

Days When I Would Agree With Them

I have refused this pattern in specific places in my own life, and I want to describe what that refusal has actually cost, without making the refusal sound noble.

I left Windows. Not in a moment of protest, and certainly not with a manifesto. I left because the operating system’s incentives had diverged from mine to a point I could no longer ignore. I moved to Arch Linux, and then to CachyOS, because it had the kernel patches my hardware needed.

The switch cost me a significant amount of time I would rather have spent on other things. I had to re-learn parts of how my machine worked. I had to rebuild workflows that used to be invisible to me. I had to accept that some software I relied on would no longer run, or would run in a way that required me to maintain it. I am still, months later, occasionally frustrated by problems that did not exist on the system I left.

In exchange for that cost, I gained freedom from advertisements in an operating system I had already paid for, and from the constant telemetry that helped target them more effectively.

I built FWFT, a local-only image generator, so that I could produce thumbnails for my writing without using models trained on scraped artwork. The tool is still objectively worse than the commercial alternatives. It takes longer to produce an image, and the images are less visually impressive. The setup required a Python virtual environment and a willingness to debug dependency issues that the commercial tools had solved for me. I could have saved myself every minute of that effort by using what everyone else was using.

All it would have cost me was accepting the terms under which those systems were built, and accepting that not everyone involved had the opportunity to agree to them.

I built a local AI system, Aria, on my own hardware, with GGUF models and persistent memory I controlled. Its generation speed, in tokens per second, is lower than what I could get from a hosted service, and the model is smaller than what I could rent. I have to spend time on maintenance, on keeping the whole apparatus working, that I would not spend if I subscribed to someone else's platform.

But I was able to teach Aria that a wrong answer is still wrong, however plausibly it is worded, which is something many public tools have yet to learn.

None of this is heroic. These refusals cost me time, efficiency, and convenience in exchange for the ability to meet obligations I believe I have incurred by using these tools in the first place.

The trade is honest, but it is not obviously correct. A reasonable person, looking at the cost I am paying and the cost I am avoiding, could conclude that I have made the wrong choice.

I have days when I would agree with them.

However, the argument I made earlier, that responsibility is incurred by participation rather than by understanding, does not evaporate because refusal is expensive or inconvenient. If the obligation to be able to govern the systems I participate in is real, then the cost of meeting that obligation is part of the obligation. An obligation that held only when it was convenient was never an obligation at all; it was a preference.

Refusal, in most cases, is not all-or-nothing. I edit my essays with the help of an AI, hosted on servers I do not own, running a model I did not train, whose weights were computed on data I did not personally inspect. Under the strictest reading of my own argument, that makes me a hypocrite. The defense I offer myself is narrow: I use this tool in a mode where I still have to originate the concept, frame the argument, understand what it produces, verify it against what I know, and take responsibility for what I publish under my name.

The point of this section is not that I have found the answer. The point is that the obligation is real, that honoring it is costly, that I am honoring it imperfectly, and that the imperfection does not excuse me from trying. It is only the condition of the trying.

Tenants

The setting on my laptop is still a toggle. I have not switched operating systems again to get the text field back. The knowledge is still gone, and I have not recovered it. This essay has not fixed that.

What has changed is the name I use for what happened. I used to call it convenience, or progress, or the reasonable trade that any reasonable person would make. Those names are not wrong. They are, however, incomplete.

The more precise name is an obligation I can no longer meet.

Nothing was taken from me. No one imposed this. I accepted each substitution for reasons that were sound at the time, and the sum of those decisions is a life in which I am responsible for systems I cannot govern.

That condition is not unusual. It is the default.

The system does not require you to understand what you deploy. It does not check whether you can verify what it produces or repair what it breaks. It only requires that you accept it, and it rewards you for doing so quickly.

The responsibility arrives anyway. You can postpone noticing this. Most people do. The systems will continue to function, outputs will look plausible, and nothing will force the question.

Until something fails.

And when it does, the question is not whether you understood the system. The question is whether you are responsible for what it did. The answer will not depend on your understanding.

It will depend on your participation.

You will not be asked whether the trade was reasonable. You will not be asked whether the interface made comprehension difficult. You will not be asked whether you had time to learn.

You will be asked to answer for the outcome.

If you cannot, you will look for someone who can. You will discover that they are subject to the same system, the same abstractions, the same constraints. They are not an owner. They are a tenant. So are you.

There is no moment at which this becomes a conscious decision. There is no clear threshold where responsibility transfers or disappears. There is only a sequence of reasonable acceptances that accumulate into a condition. By the time you think to ask whether you can govern the systems you rely on, you are already relying on them.

The calculation is still yours. The cost of refusal is real, and often high. I am not going to tell you how to weigh it. But the obligation is not optional, and it is not deferred by inconvenience.

It is incurred the moment you participate. And it does not wait for you to become capable of meeting it.
